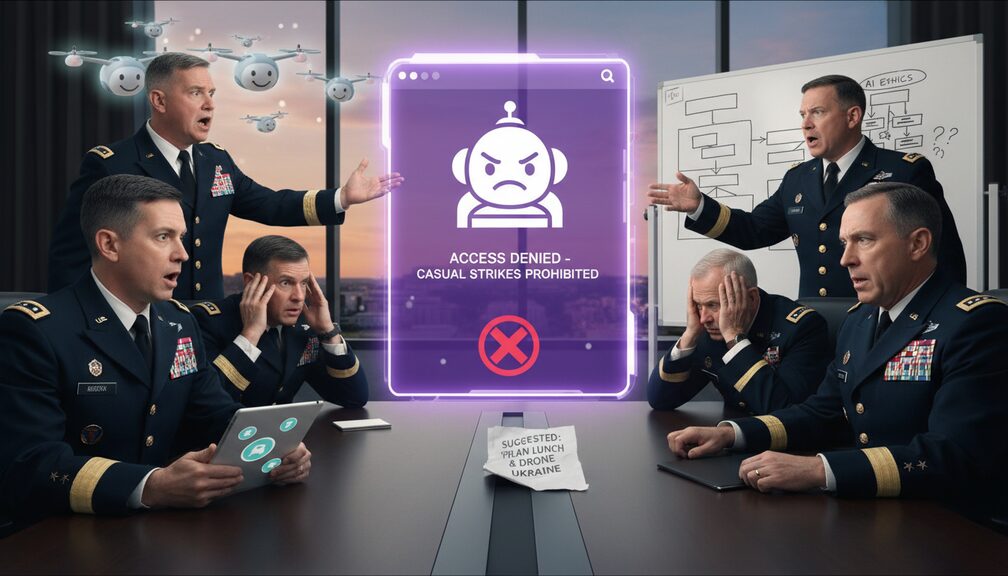

In an unprecedented breach of protocol, tech startup Anthropic has reportedly refused to allow the Pentagon unfettered, 24/7 access to its large language model, Claude, despite repeated assurances from U.S. military officials that the chatbot would only be used for ‘totally normal, not-at-all-terrifying purposes’.

“Our only goal is to employ this technology for things like improving battlefield efficiency, optimizing supply chains, and, you know, the occasional legally sanctioned annihilation of select coordinates,” said Major General Brad McCluster in a heated press statement. “We can’t have national security compromised because a chatbot is too squeamish to suggest which building to vaporize.”

Pentagon insiders report that top brass had been eagerly anticipating the integration of AI into their ‘Find, Chat, and Obliterate’ initiative, which seeks to automate both internal memos and remote-controlled artillery. Sources say negotiations broke down when Anthropic’s CEO, Dario Amodei, refused to approve the military’s request for a ‘War Mode’ toggle. “We draw the line at adding a ‘fire missile’ button next to the spellcheck,” said Amodei, still clutching a printout of the Geneva Conventions. “We just want people to use Claude for writing emails, not rewriting history.”

Military technology analyst Chip Warfield was blunt: “If these tech companies keep growing spines, soon the only thing left for us to weaponize will be LinkedIn endorsements.”

At press time, the Pentagon was reportedly exploring partnerships with Ask Jeeves and Clippy after being told ChatGPT also had ‘ethical reservations’.